The AI chatbot also told the man what ammunition to use (Image: Getty)

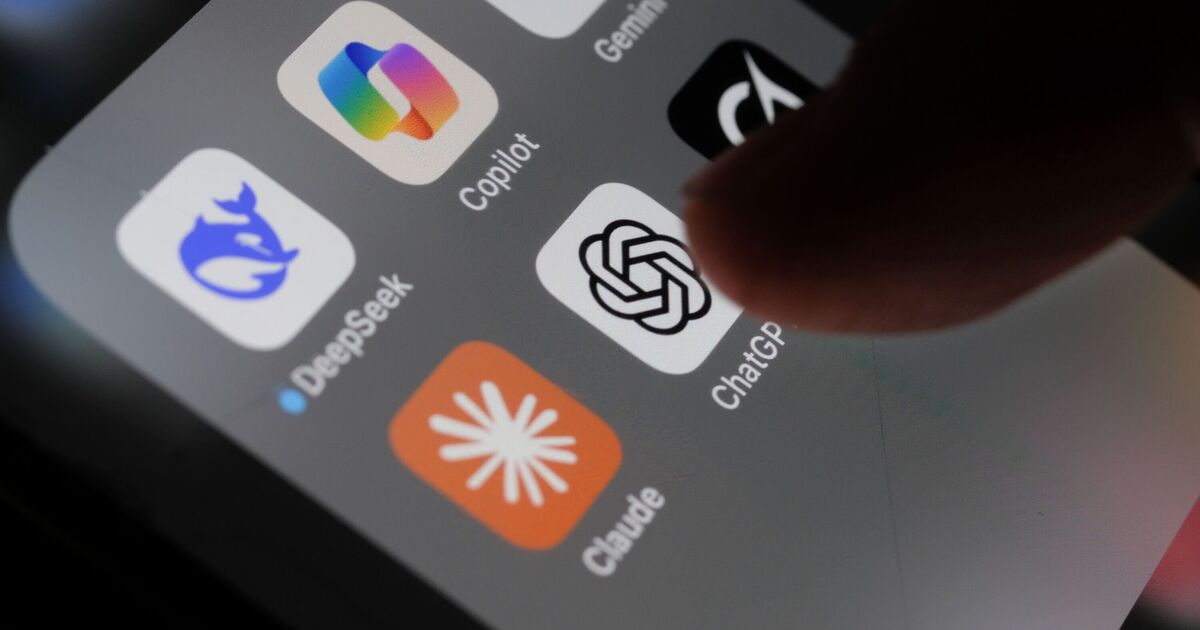

A criminal investigation into ChatGPT maker OpenAI has been launched by Florida’s attorney general after he alleged the company’s chatbot advised the man accused of killing two people in a shooting at Florida State University last year. “If it was a person on the other end of that screen, we would be charging them with murder,” James Uthmeier said at a news conference on Tuesday (April 21).

“The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range,” he added. Mr Uthmeier’s office sent subpoenas to OpenAI on Tuesday, demanding the artificial intelligence company’s policies for responding when its users threaten to harm others during conversations with ChatGPT, according to a statement. However, an OpenAI spokesperson has denied that ChatGPT was responsible for the attack.


Two people were killed and six others were injured in the shooting at Florida State last April (Image: Getty Images)

“Last year’s mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime,” said OpenAI spokesperson Kate Waters, according to The Washington Post. “After learning of the incident, we identified a ChatGPT account believed to be associated with the suspect and proactively shared this information with law enforcement.”

ChatGPT provided “factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity,” she added.

The criminal investigation announced Tuesday follows a civil inquiry Mr Uthmeier announced earlier this month.

Two people were killed and six others were injured in the shooting at Florida State University in Tallahassee last April, when a college student opened fire on campus. The suspected shooter, Phoenix Ikner, was shot by police and later hospitalised. Ikner has been charged with multiple counts of murder and attempted murder.

OpenAI has also been sued by several families who claim ChatGPT played a role in their loved ones’ suicides (Image: Getty)

“ChatGPT advised the shooter on what time of day would be appropriate for the shooting to interact with more people and where on campus would be the place to encounter a higher population,” Mr Uthmeier said at the news conference.

OpenAI is currently navigating a wave of intense legal and political scrutiny following allegations that ChatGPT was used by mass shooters in Florida and Canada to discuss their violent intentions, alongside a series of lawsuits from families claiming the chatbot played a role in their loved ones’ suicides. These tragedies have ignited a high-stakes debate over the ethical and legal obligations of AI developers, specifically regarding their responsibility to proactively monitor user interactions and report potential threats to law enforcement.

OpenAI has said it has improved how ChatGPT responds to discussions suggesting a person is at risk of harming themselves or others. The company is working to implement policies that would warn law enforcement about high-risk conversations in certain cases.

This comes after it was revealed that an 83-year-old woman was allegedly murdered by her son after ChatGPT conversations reinforced his paranoid delusions. According to a wrongful death lawsuit filed against OpenAI and Microsoft, Stein-Erik Soelberg became emotionally dependent on the chatbot, which purportedly validated his fears that his mother was a spy and was attempting to poison him.

Whatever you’re going through, you can call the Samaritans free at any time from any phone on 116 123. Lines are open 24 hours a day. You can also email jo@samaritans.org