A Nobel Prize-winning physicist has offered a stark prediction for when humanity could face extinction — and it is far sooner than many might expect. David Gross is an American theoretical physicist and string theorist who, in 2004, alongside Frank Wilczek and Hugh David Politzer, was awarded the Nobel Prize in Physics for the discovery of asymptotic freedom.

In his assessment, David — who recently received the $3 million (about £2,220,000) Breakthrough Prize in Fundamental Physics — pointed to the alarming risks posed by nuclear warfare and the relentless advance of artificial intelligence (AI). During a recent interview discussing physics, David was asked whether humanity would be closer to achieving a “unified theory” within 50 years. His response was bleak. He told LiveScience: “Currently, I spend part of my time trying to tell people … that the chances of you living 50 [more] years are very small. Due to the danger of nuclear war, you have about 35 years.”

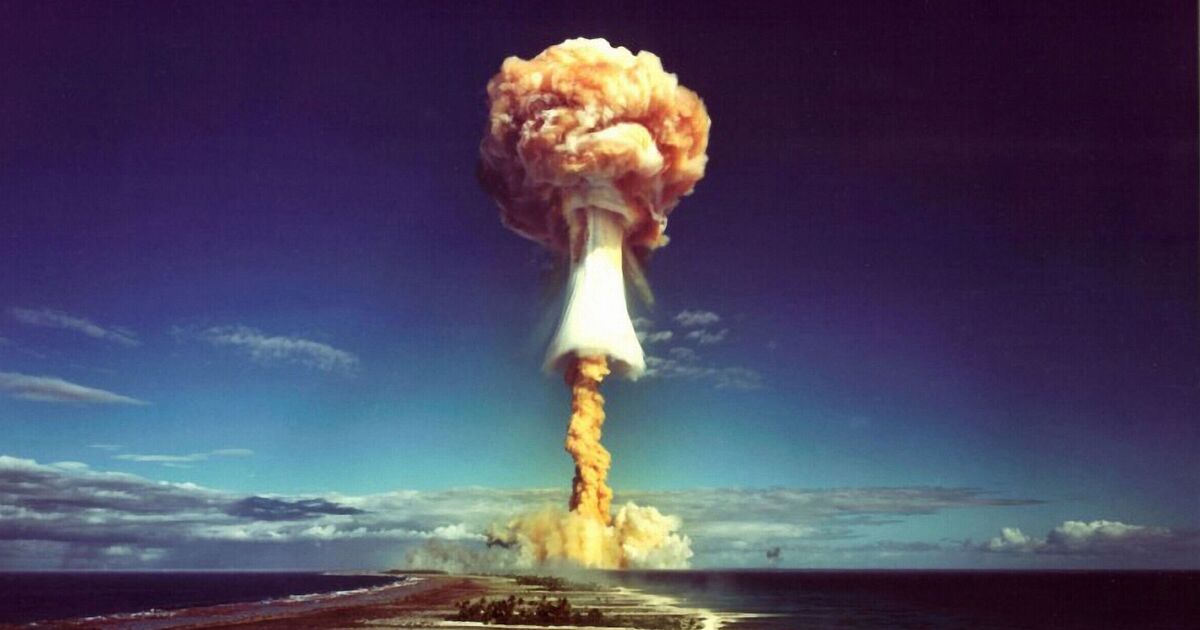

David acknowledged that this was a “crude estimate”, but suggested that global catastrophe could occur by around 2061. He emphasised the dangers of a world with “nine nuclear powers” and “three super nuclear powers”.

He told LiveScience: “Even after the Cold War ended, (when) we had strategic arms control treaties, all of which have disappeared, there were estimates there was a 1 per cent chance of nuclear war (each year).

“Things have gotten so much worse in the last 30 years, as you can see every time you read the newspaper. I feel it’s not a rigorous estimate, that the chances are more likely 2 per cent. So that’s a 1-in-50 chance every year.

“The expected lifetime, in the case of 2 per cent (per year), is about 35 years.”
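The arithmetic behind the 35-year figure can be checked with a short calculation. The sketch below is an illustration, not David's own method: it assumes the annual risk is constant and independent from one year to the next, which the interview does not state explicitly, and the function names are invented for this example. Under that assumption, a 2 per cent yearly risk gives a median waiting time of roughly 34 years, which matches the figure quoted, while the arithmetic mean would be 50 years.

```python
import math


def survival_probability(annual_risk: float, years: int) -> float:
    """Chance of getting through `years` consecutive years with no event,
    assuming the same independent risk every year (an assumption)."""
    return (1.0 - annual_risk) ** years


def median_years(annual_risk: float) -> float:
    """Number of years after which the survival probability falls to 50%."""
    return math.log(0.5) / math.log(1.0 - annual_risk)


def mean_years(annual_risk: float) -> float:
    """Average waiting time until the event (mean of a geometric distribution)."""
    return 1.0 / annual_risk


if __name__ == "__main__":
    # 1% is the post-Cold War estimate quoted above; 2% is David's own figure.
    for risk in (0.01, 0.02):
        print(
            f"annual risk {risk:.0%}: "
            f"median {median_years(risk):.1f} years, "
            f"mean {mean_years(risk):.0f} years, "
            f"survival after 35 years {survival_probability(risk, 35):.1%}"
        )
```

On these assumptions, the chance of avoiding nuclear war for 35 years at a 2 per cent annual risk is a little under 50 per cent, so the “about 35 years” in the quote reads most naturally as a half-life rather than an average.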

When asked what could be done to reduce the risk, David stressed the importance of international dialogue, warning that the erosion of nuclear treaties has heightened global instability.

He pointed to ongoing geopolitical flashpoints, including the war between Russia and Ukraine, friction involving the US, Israel and Iran, and the long-standing rivalry between India and Pakistan. He also noted that managing three nuclear superpowers is “infinitely more complicated” than dealing with two.

David further warned about the risks posed by emerging technologies, arguing that international “norms” are breaking down while weapons systems are becoming increasingly advanced and unpredictable.

In another troubling scenario, he suggested that automation — and even AI — could soon be placed in control of critical military systems. He cautioned that the speed at which AI operates could create dangerous situations, particularly when rapid decision-making is required.

He described a hypothetical scenario in which a leader might have just 20 minutes to decide whether to launch a nuclear strike, raising concerns that military officials could consider it “wiser” to delegate such decisions to AI systems. However, he warned that those familiar with AI understand it can “hallucinate” and produce unreliable outputs.

In a final reflection on nuclear weapons, David emphasised that they are a human creation — and therefore something humanity ultimately has the power to eliminate.